How Thirsty Is AI?

A case study on the water and energy demands of AI systems, using ChatGPT as a proxy to understand how model growth, data center concentration, and resource use interact.

The Backstory

Artificial intelligence is shaping up to be one of the most consequential frontier technologies of this century, even if it can seem newly arrived. In fact, ELIZA, one of the earliest natural language processing programs, was developed nearly sixty years ago (Natale 2021). Since then, AI has moved through repeated cycles of hype and disappointment before re-emerging with unusual force at the end of 2022.

ChatGPT's release in November 2022 accelerated that trajectory dramatically. Since then, ChatGPT and a growing range of generative models have reshaped not only Silicon Valley, but also the ways people interact with everyday technologies. At the same time, artificial intelligence is influencing healthcare, customer service, banking, defense, cybersecurity, logistics, and many other sectors.

Why This Matters

That scale of adoption comes with real costs. AI has already begun restructuring the labor market, affecting not only lower-wage workers but also creative fields that were once treated as uniquely human domains. More recently, early reports and projections on AI's impact on natural resources have started to emerge as well.

There is no immediate cause for panic, but there is a strong case for starting this conversation early. Putting the pieces together now gives us a better chance to assess risks and decide what level of environmental strain society is willing to accept.

Scope, Data, and Limits

This case study examines AI's demand for two critical resources: water and energy. It begins with the growth trajectory of AI systems, then turns to water consumption, freshwater availability, energy demand, and the geographic concentration of data centers in the United States. Because it is impossible to assess every new model entering the market, ChatGPT is used here as a proxy for AI systems more broadly. Its scale, visibility, and documented adoption make it a practical entry point for understanding wider infrastructure demand.

The analysis draws on Aterio's data center dataset, U.S. Energy Information Administration rankings, datasets from Our World in Data, statistics published by Intelliarts and Statista, the paper "Making AI Less 'Thirsty'" by Li et al., and Accenture's report on future U.S. data center power demand. A key limitation is that technology firms and data center operators do not publish complete energy-use information, so some sections use data center count and concentration as a proxy for AI energy demand.

1. Use of AI

The adoption of generative AI systems like ChatGPT has been astonishing. Within five days of launch in November 2022, ChatGPT reached one million users, a growth rate surpassed only by Threads (Intelliarts 2025). Given the pace of adoption so far, it is reasonable to assume that this technology is not only here to stay but also likely to continue along an aggressive growth trajectory.

Key takeaway: ChatGPT adoption rose extraordinarily fast, which helps explain why even small per-query resource costs scale into much larger infrastructure demands.

2. AI Infrastructure

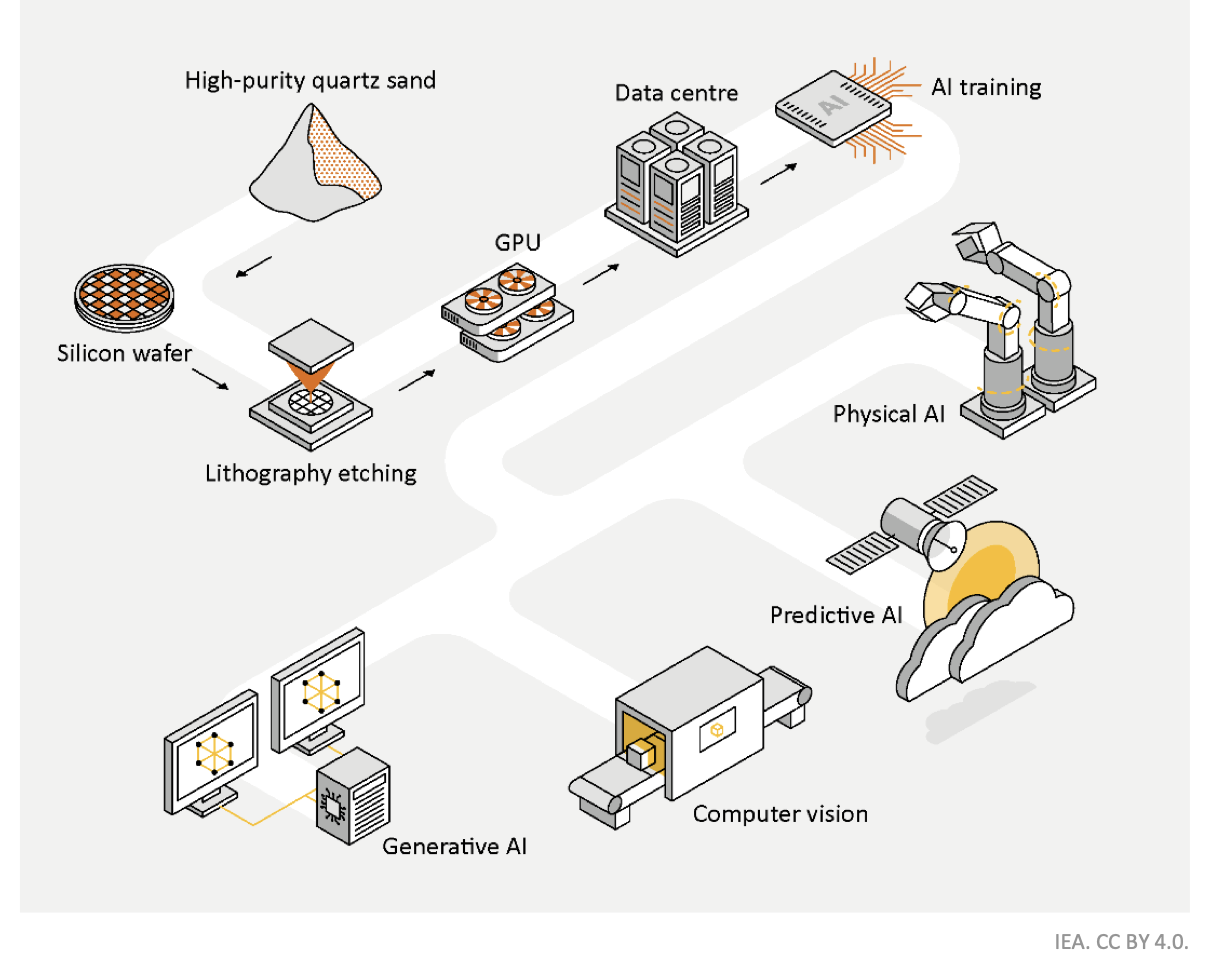

To understand what powers AI systems, it helps to look at the infrastructure beneath them. Critical minerals matter for GPU production, but the core focus here is on data centers, where AI models are trained and deployed. These facilities depend heavily on two resources: water, largely for cooling, and energy, for powering compute-intensive systems.

Among those resource demands, water is one of the least visible and most unevenly distributed.

3. How Thirsty Is AI?

The answer depends heavily on where a data center is located. A recent study compared water use for model training and inference (Li et al. 2025). Inference is the process a model uses to generate a prediction, solve a task, or produce a response after deployment (IBM Research 2023; Cloudflare n.d.).

The differences are stark. Data centers in Washington state, for example, use far more water per request than those in Texas. One major reason is the energy mix powering local data centers. Hydroelectric systems require large volumes of water to turn turbines, while fossil-fuel generation also uses substantial water for cooling. Wind and solar are much less water-intensive.

Training is extraordinarily water-intensive, but it happens episodically. Inference, by contrast, happens constantly, every time users query a system like ChatGPT.
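The contrast between episodic training and constant inference can be made concrete with a break-even calculation. The following is a minimal sketch, not a result from the cited study: the function name and all three input values are hypothetical placeholders chosen only to show the shape of the reasoning.

```python
def days_for_inference_to_match_training(
    training_litres: float,
    requests_per_day: float,
    litres_per_request: float,
) -> float:
    """Days of steady inference needed to equal one training run's water use."""
    daily_litres = requests_per_day * litres_per_request
    if daily_litres <= 0:
        raise ValueError("daily inference water use must be positive")
    return training_litres / daily_litres


# All three inputs are hypothetical placeholders, not measurements.
break_even_days = days_for_inference_to_match_training(
    training_litres=1_000_000.0,
    requests_per_day=1_000_000.0,
    litres_per_request=0.05,
)
print(f"Inference matches one training run after about {break_even_days:.0f} days")
```

The point of the sketch is structural: a one-time training cost is fixed, while inference cost grows with every day of use, so at high enough request volumes inference eventually dominates.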

Key takeaway: Water intensity varies sharply by state, showing that where AI workloads are processed can materially change their resource footprint.

In Washington, ten GPT-3 requests can consume the equivalent of a 500 ml bottle of water. In Texas, it takes far more requests to reach the same total. The U.S. average sits between those extremes. Users also rarely know where their requests are actually processed: a request sent from New York could easily be routed to a data center in another state entirely.
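The bottle comparison is simple arithmetic, and a short sketch makes the scaling explicit. Only the Washington figure (ten requests per 500 ml bottle) comes from the text above; the function names and the one-million-request volume are illustrative assumptions, not measured values.

```python
BOTTLE_ML = 500.0  # bottle size used in the comparison above


def ml_per_request(requests_per_bottle: float) -> float:
    """Water per request implied by 'N requests equal one 500 ml bottle'."""
    if requests_per_bottle <= 0:
        raise ValueError("requests_per_bottle must be positive")
    return BOTTLE_ML / requests_per_bottle


def litres_for(requests: int, requests_per_bottle: float) -> float:
    """Total water, in litres, for a given number of requests."""
    return requests * ml_per_request(requests_per_bottle) / 1000.0


# Washington figure from the text: ten requests per bottle.
washington_ml = ml_per_request(10)

# Hypothetical request volume, chosen only to illustrate scaling.
washington_litres = litres_for(1_000_000, 10)

print(f"{washington_ml:.0f} ml per request")
print(f"{washington_litres:,.0f} litres per million requests")
```

Passing a larger `requests_per_bottle` value models a more water-efficient location; the exact value for any other state would need to come from the underlying study.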

States that are water-inefficient for training also tend to be inefficient for inference. States such as Texas, Georgia, and Wyoming perform better on both dimensions. That efficiency divergence becomes especially important later, when the analysis turns to where data centers are actually being built.

Putting Water in Context

Freshwater is already a constrained resource. AI data centers rely on substantial volumes of water for cooling, and although there are efforts to improve efficiency, the current systems still depend on large withdrawals from a limited supply (Garg and Kitsara 2025).

Renewable internal freshwater resource flows, including river flows and groundwater recharge from rainfall, have been declining for decades and are expected to face growing pressure under climate change.

Key takeaway: Freshwater availability has been declining over time, which makes rising AI-related cooling demand more consequential rather than less.

4. Power Hungry AI

Water is only half the story. AI also demands enormous amounts of energy. According to a 2024 report by the U.S. Department of Energy, data centers could account for between 6.7% and 12.0% of total U.S. electricity demand by 2028. Other models project even higher growth, especially for hyperscale facilities (Lawrence Berkeley National Laboratory 2024; Data Center Knowledge 2023).

In the most conservative scenarios, annual energy demand from data centers rises by 4% to 15%. In more aggressive scenarios, the increase reaches 20% to 40%. If that upper-end outcome materializes, households and small businesses may find themselves competing for energy access with large-scale data operations (Accenture 2025).
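To see why the spread between scenarios matters, it helps to compound the rates. The sketch below assumes the percentages above are year-over-year growth rates, which is an interpretation rather than something the cited reports state in this form. The five-year horizon and the scenario labels are also illustrative choices.

```python
def growth_multiplier(annual_rate: float, years: int) -> float:
    """Factor by which demand grows after `years` of compounding at `annual_rate`."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return (1.0 + annual_rate) ** years


# Scenario bounds quoted in the text, read as annual growth rates (assumption).
scenarios = {
    "conservative_low": 0.04,
    "conservative_high": 0.15,
    "aggressive_low": 0.20,
    "aggressive_high": 0.40,
}

HORIZON_YEARS = 5  # hypothetical horizon, for illustration only

for name, rate in scenarios.items():
    multiplier = growth_multiplier(rate, HORIZON_YEARS)
    print(f"{name}: x{multiplier:.2f} after {HORIZON_YEARS} years")
```

Under this reading, 4% growth compounds to roughly 1.2 times the starting demand over five years, while 40% growth compounds to roughly 5.4 times, which is why the choice of scenario changes the planning picture so sharply.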

5. The Data Centers That Power AI's Growth

Because companies disclose little about the direct energy use of specific AI models, data center counts and concentration become one useful way to approximate where AI's infrastructure burden is gathering. Virginia's role in this landscape is widely known, but the concentration is even more pronounced when placed beside state-level energy consumption.

Key takeaway: Data center concentration and high state-level energy use often cluster together, suggesting that AI infrastructure growth is reinforcing pressure on already energy-intensive regions.

The five states hosting the largest number of data centers also consume significantly more energy than the national average. Texas is especially notable, consuming roughly five times as much energy as the average state while hosting hundreds of data centers. California also stands far above the national baseline, while states such as Oregon, Nevada, and Iowa combine large data center footprints with comparatively lower consumption, due in part to renewable-heavy energy mixes (U.S. Energy Information Administration 2023).
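A comparison like "five times the average state" reduces to dividing each state's consumption by the mean across states. The sketch below shows that calculation under stated assumptions: the function name is hypothetical, and the sample values are placeholders in arbitrary units, not EIA figures.

```python
from statistics import mean


def ratio_to_average(consumption_by_state: dict[str, float]) -> dict[str, float]:
    """Each state's consumption divided by the mean across all listed states."""
    if not consumption_by_state:
        raise ValueError("need at least one state")
    average = mean(consumption_by_state.values())
    return {state: value / average for state, value in consumption_by_state.items()}


# Placeholder values in arbitrary units; NOT actual EIA data.
example = {"State A": 50.0, "State B": 10.0, "State C": 10.0, "State D": 10.0}

for state, ratio in ratio_to_average(example).items():
    print(f"{state}: {ratio:.2f}x the average")
```

Note that a single very large consumer pulls the mean upward, so ratios computed this way understate how far that state stands above a typical one; comparing against the median is a reasonable alternative.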

The top five states account for half of all newly announced and under-construction facilities. Virginia, Georgia, Texas, and Arizona are especially prominent. That pattern returns us to the earlier question about water efficiency. Some of the same states that appear environmentally strained still remain attractive locations for major data center expansion.

Key takeaway: The buildout pipeline is nearly as large as the existing footprint, which signals that current resource pressures are likely to intensify rather than level off.

As of May 2025, there are roughly as many newly announced and under-construction facilities as there are already active ones. That is a striking pace of buildout, and it underscores how urgent these questions have become.

Conclusion

Despite the limitations in available data, the patterns point in the same direction: AI systems are expanding quickly, and that expansion requires large and growing quantities of water and energy. Water use spikes during training, but the explosion in daily AI use also means sustained cooling needs for data centers that serve constant inference requests. Energy demand is rising fast enough to place meaningful pressure on electricity systems and prices.

Because the scale of these shifts is so large, it is unrealistic to expect individual users to carry the burden of response on their own. A coordinated policy effort at local, state, and federal levels is needed to govern the environmental and economic consequences of AI infrastructure growth. Individuals can still exercise judgment about how they use these systems and what kinds of regulation they demand, but those choices need institutional support.

As this post was being completed in May 2025, the U.S. House of Representatives passed legislation proposing a ten-year ban on state AI regulation. That development only sharpens the stakes: questions about public-interest governance are arriving at the same moment that the resource footprint of AI is accelerating (Reuters 2025).

References